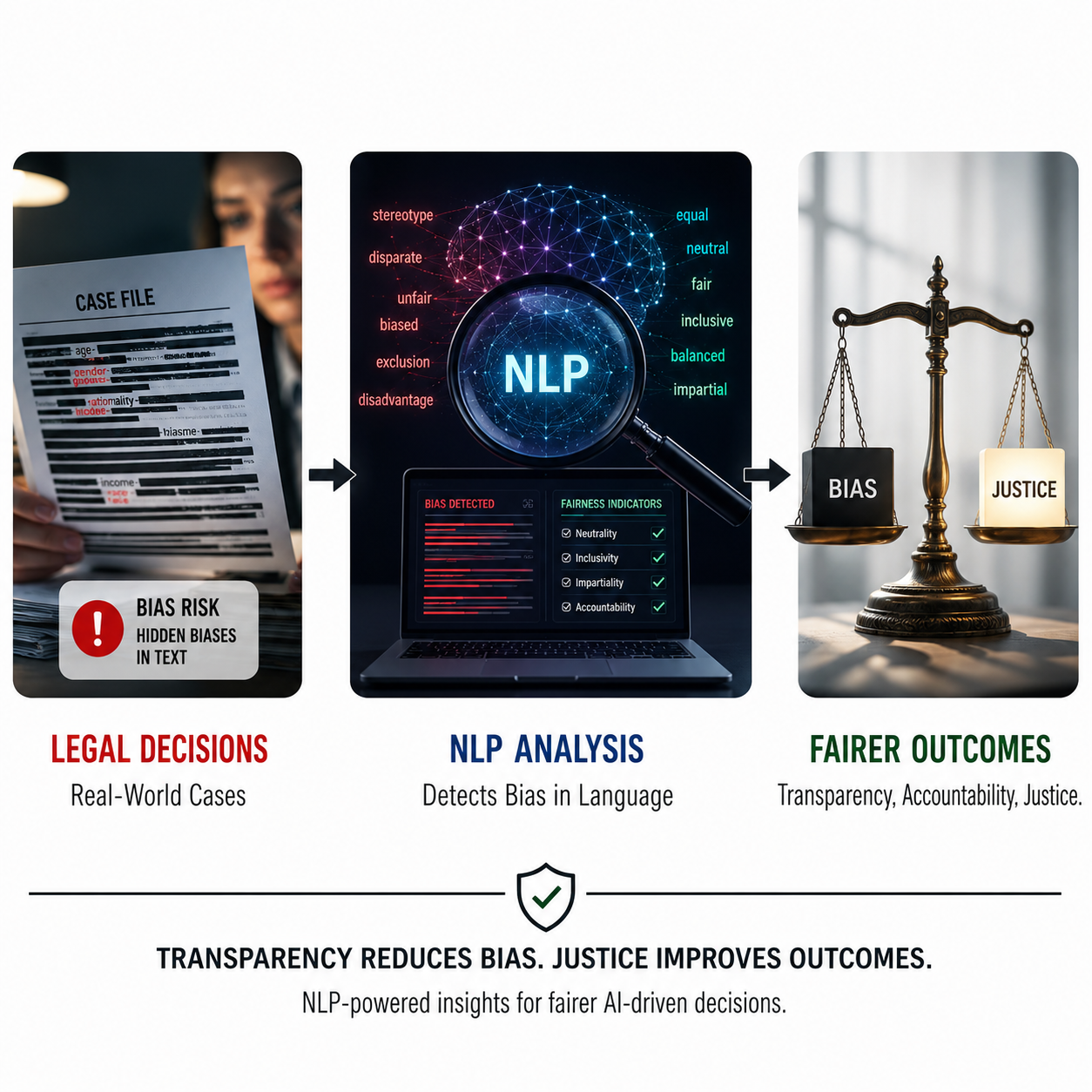

Algorithmic Bias and Justice in AI Systems: A Data-Driven NLP Analysis of Decision-Making Frameworks in Global Governance

Abstract

The integration of artificial intelligence (AI) into decision-making systems has intensified concerns about algorithmic bias and its implications for fairness in global governance. Despite growing interest in AI ethics, empirical evidence drawn from real-world legal data remains limited. This study develops a data-driven framework that uses Natural Language Processing (NLP) to analyze bias in AI-assisted legal and administrative decisions. A dataset of 420 legal cases from five continents (2018–2025) was examined using lexical and semantic bias indicators, fairness scores, and governance variables such as transparency and accountability. The methodology combines computational text analysis (spaCy, NLTK) with statistical modeling, including correlation and regression analysis, supported by expert validation. Results reveal a significant inverse relationship between bias and fairness, as well as a moderating effect of transparency that weakens the impact of bias on fairness. AI involvement is found to amplify existing structural biases under low-transparency conditions. The findings show that algorithmic bias is a governance-dependent phenomenon, and they provide a scalable framework for improving fairness in AI-driven decision systems.
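The abstract does not specify the exact regression model. A common way to test whether transparency moderates the relationship between bias and fairness is ordinary least squares with an interaction term, fairness = b0 + b1·bias + b2·transparency + b3·(bias × transparency). Under that model, a negative b1 with a positive b3 means bias lowers fairness, but less so as transparency rises. The Python sketch below assumes each case already has numeric bias, transparency, and fairness scores; the function names `fit_moderated_regression` and `bias_slope_at` and the synthetic data are hypothetical illustrations, not the authors' code or dataset.

```python
import numpy as np


def fit_moderated_regression(bias, transparency, fairness):
    """OLS fit of fairness = b0 + b1*bias + b2*transparency + b3*bias*transparency.

    Hypothetical helper. Returns the four coefficients as a dict.
    """
    bias = np.asarray(bias, dtype=float)
    transparency = np.asarray(transparency, dtype=float)
    fairness = np.asarray(fairness, dtype=float)

    # Design matrix: intercept, two main effects, and the interaction (moderation) term
    X = np.column_stack([
        np.ones_like(bias),
        bias,
        transparency,
        bias * transparency,
    ])
    coef, *_ = np.linalg.lstsq(X, fairness, rcond=None)
    names = ["intercept", "bias", "transparency", "bias_x_transparency"]
    return dict(zip(names, coef))


def bias_slope_at(coefs, transparency_level):
    """Simple slope: marginal effect of bias on fairness at a given transparency level."""
    return coefs["bias"] + coefs["bias_x_transparency"] * transparency_level


if __name__ == "__main__":
    # Synthetic, hypothetical scores for illustration only (NOT the study's data)
    rng = np.random.default_rng(0)
    n = 420
    bias = rng.uniform(0, 1, n)
    transparency = rng.uniform(0, 1, n)
    noise = rng.normal(0, 0.05, n)
    fairness = 0.9 - 0.6 * bias + 0.1 * transparency + 0.4 * bias * transparency + noise

    # Bivariate check of the inverse bias-fairness relationship
    r = np.corrcoef(bias, fairness)[0, 1]
    print(f"Pearson r(bias, fairness) = {r:.2f}")

    coefs = fit_moderated_regression(bias, transparency, fairness)
    for name, value in coefs.items():
        print(f"{name:>22}: {value:+.3f}")

    # A less negative slope at high transparency indicates moderation
    print("bias slope at transparency 0.1:", round(bias_slope_at(coefs, 0.1), 3))
    print("bias slope at transparency 0.9:", round(bias_slope_at(coefs, 0.9), 3))
```

In practice, centering bias and transparency before forming the product term is often recommended so that b1 and b2 describe effects at average levels of the other variable; a full analysis would also report standard errors and p-values (for example via a statistics package), which this sketch omits.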

Published papers are the exclusive responsibility of their authors and do not necessarily reflect the opinions of the editorial committee.

IJMSOR respects the moral rights of its authors, who must cede the patrimonial rights to the published material to the editorial committee. In turn, the authors declare that this work has not been previously published.

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 3.0 Unported License.