Higher Education and Predictive Analytics: Assessing Student Performance with Artificial Intelligence

Abstract

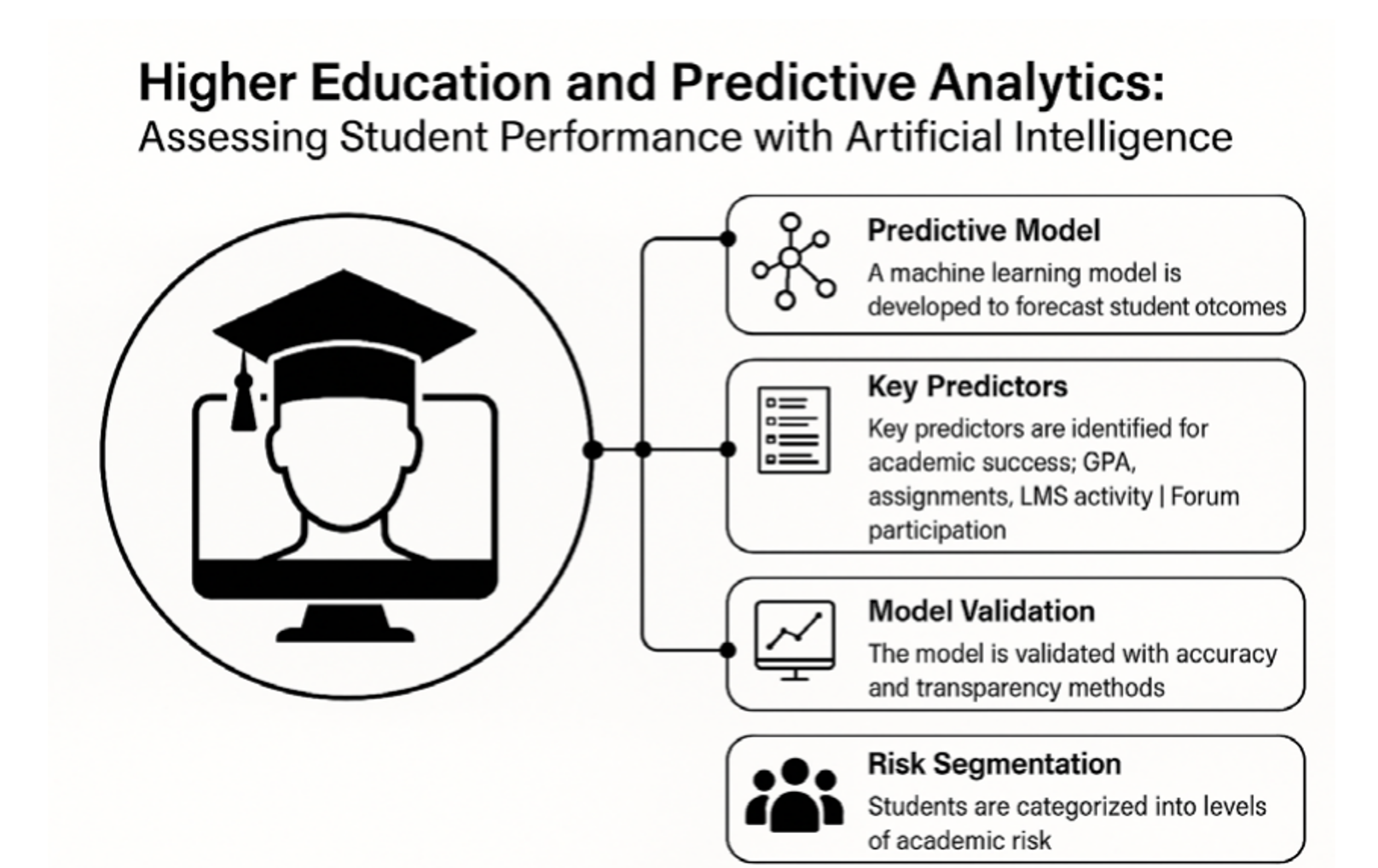

Student retention and academic performance remain persistent challenges in higher education systems, particularly in data-constrained and high-uncertainty environments. However, there is limited empirical evidence on how explainable artificial intelligence models can simultaneously predict and interpret academic outcomes at scale. This study develops and validates a predictive analytics model using machine learning techniques applied to an anonymized dataset of 5,500 university students. A Random Forest classifier was trained and evaluated using accuracy, recall, specificity, and AUC-ROC metrics, achieving an accuracy of 87% and an AUC of 0.91, which indicates high predictive performance. Key predictors included historical GPA, assignment submission rates, LMS access frequency, and forum participation. To address model transparency, SHAP (SHapley Additive exPlanations) analysis was implemented, enabling the identification of both the magnitude and direction of each variable's influence on predictions. Additionally, the student population was segmented into three academic risk groups (high: 19%, medium: 33.9%, low: 47.1%), revealing distinct behavioral and performance patterns. These findings demonstrate that explainable AI not only enhances prediction accuracy but also provides actionable insights for early intervention and personalized academic support. This study contributes empirical and methodological advances by integrating predictive performance with interpretability, offering a scalable framework for data-driven decision-making in higher education systems.
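To make the evaluation vocabulary in the abstract concrete, the following is a minimal sketch of how accuracy, recall, specificity, and AUC-ROC can be computed from a classifier's outputs, and how predicted probabilities might be bucketed into three risk groups. It is not the study's implementation. The toy labels and scores, the 0.5 decision threshold, the treatment of "at risk" as the positive class, and the 0.33/0.66 risk cut-offs are all assumptions made for illustration; the abstract does not state how its risk groups were defined.

```python
from typing import Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (tp, tn, fp, fn), treating label 1 as the positive ("at risk") class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def auc_roc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a random positive outscores a random negative.

    Ties count as half a win (the Mann-Whitney formulation of AUC-ROC).
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def risk_group(prob: float, low_cut: float = 0.33, high_cut: float = 0.66) -> str:
    """Map a predicted risk probability to a group; the cut-offs are hypothetical."""
    if prob >= high_cut:
        return "high"
    if prob >= low_cut:
        return "medium"
    return "low"


# Toy data (assumed, not from the study): 1 = at-risk student, 0 = not at risk.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.7, 0.1, 0.6]  # predicted P(at risk)
y_pred = [1 if s >= 0.5 else 0 for s in scores]     # assumed 0.5 threshold

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
recall = tp / (tp + fn)        # share of at-risk students correctly flagged
specificity = tn / (tn + fp)   # share of not-at-risk students correctly cleared
auc = auc_roc(y_true, scores)
groups = [risk_group(s) for s in scores]

print(f"accuracy={accuracy:.3f} recall={recall:.3f} "
      f"specificity={specificity:.3f} auc={auc:.3f}")
print({g: groups.count(g) for g in ("high", "medium", "low")})
```

Reporting recall and specificity alongside accuracy matters in this setting because the risk classes are imbalanced: a model could reach high accuracy while still missing many at-risk students. AUC-ROC summarizes ranking quality independently of any single decision threshold.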

Published papers are the exclusive responsibility of their authors and do not necessarily reflect the opinions of the editorial committee.

IJMSOR respects the moral rights of its authors, who must cede the patrimonial rights of the published material to the editorial committee. In turn, the authors declare that the current work has not been previously published.

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 3.0 Unported License.